Data Science Process: Everything You Need to Know

Data science is a buzzword that almost everyone has heard in recent years. It is a field closely associated with “Big Data” and carries a lot of potential for the future. Apart from working with large structured and unstructured datasets, data science draws on technologies such as machine learning and artificial intelligence.

The scale of the field is reflected in its market value. According to recent studies, the global data science platform market is expected to reach USD 695 billion by 2030, growing at a CAGR (Compound Annual Growth Rate) of 27.6%.
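For readers unfamiliar with the term, a compound annual growth rate describes steady year-over-year growth: a value multiplied by (1 + rate) once per year. The short sketch below shows the arithmetic. The five-year horizon and the starting value of 1.0 are assumptions chosen for illustration, since the base year of the cited study is not given here.

```python
def project(start_value, rate, years):
    """Value after compounding `rate` once per year for `years` years."""
    return start_value * (1 + rate) ** years


def cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start and end value."""
    return (end_value / start_value) ** (1 / years) - 1


rate = 0.276            # the 27.6% CAGR quoted above
horizon_years = 5       # assumed horizon, for illustration only

# How much a starting value of 1.0 grows over the assumed horizon.
growth_factor = project(1.0, rate, horizon_years)
print(f"Growth factor over {horizon_years} years: {growth_factor:.2f}x")

# Recovering the rate from the two endpoints gives back 27.6%.
print(f"Implied CAGR: {cagr(1.0, growth_factor, horizon_years):.1%}")
```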

As technology evolves, data science processes are becoming more advanced as well.

This write-up covers the essentials of data science, the complete data science process, and why it matters in the modern business landscape.

What is the Data Science Process?

The data science process is a systematic approach to solving real-life problems by evaluating existing data.

Data scientists carry out the process to analyse, visualise and model large datasets, deriving new information from them. The data science process enables data scientists to convert information into actionable insights that lead to meaningful outcomes for the business.

In practice, the data science process lays down a structured framework that articulates the problem as a question, works out a solution using the existing datasets, and then presents the outcomes to the stakeholders.

Data Science Life Cycle

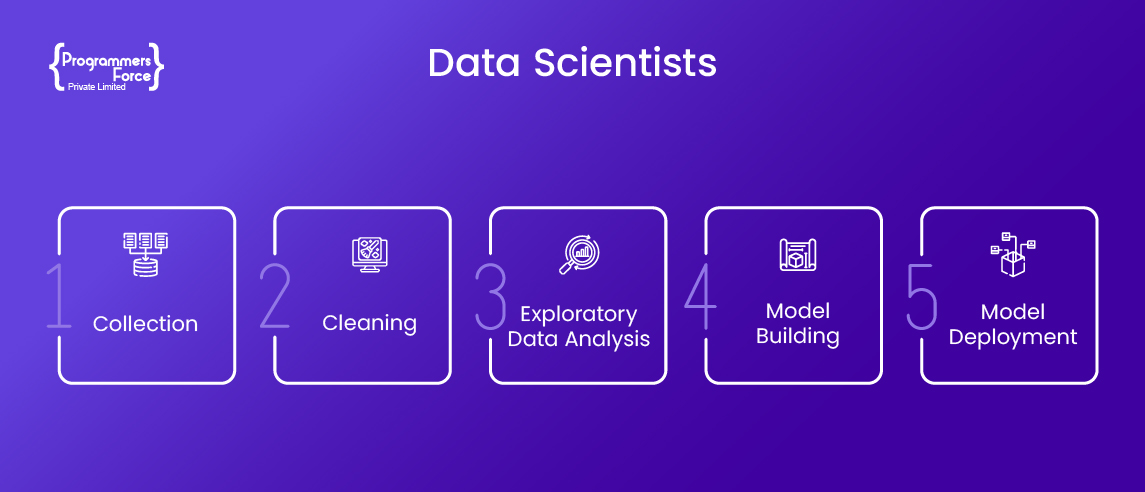

The data science life cycle comprises several stages, each of which plays a core part in keeping the workflow effective. Here are the steps most businesses follow to implement data science strategies and solutions.

Frame the Issue

The first step in any data science process is understanding the problem. Once they have a grasp of the issue, data scientists can frame the problem for the relevant stakeholders and get started on building a solution.

This step is essential for creating an effective model that can have a positive impact on the organisation. When looking for the actual problem, businesses should ask questions such as who the potential consumers of the product are.

Collect Data

This phase is also known as the data preparation step. The more precise your data is, the better your results will be. Many businesses store their data in CRM (Customer Relationship Management) systems. That data can be analysed more easily once it has been exported to more advanced data analysis tools.
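As a rough illustration of that export step, the sketch below reads a CSV export into Python using only the standard library. The column names and sample rows are hypothetical; a real CRM export would have its own schema and would normally be read from a file rather than an in-memory string.

```python
import csv
import io

# Hypothetical CRM export. In practice this would be a file on disk;
# the column names here are assumptions made for the example.
CRM_EXPORT = """customer_id,signup_date,region,monthly_spend
1001,2023-01-15,North,120.50
1002,2023-02-03,South,89.99
1003,2023-02-20,North,
"""


def load_crm_export(source):
    """Read CSV rows into a list of dicts keyed by column name."""
    return list(csv.DictReader(source))


records = load_crm_export(io.StringIO(CRM_EXPORT))
print(len(records), "records loaded")
print(records[0])
```

Note that every value arrives as a string, and the last row has an empty `monthly_spend`. Gaps like this are what the cleaning step has to deal with.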

Clean Data

There may be inconsistencies in the data, such as missing values, blank columns, or an improper data format. Before modelling can begin, the data must first be cleaned, examined, and prepared.

The team will then plan out a model, figuring out which steps to take and which tools to use so that the connections between the input variables can be visualised. Various statistical formulas and visualisation tools are used to plan a model. Software such as SQL Analysis Services, R, and SAS/Access is commonly used at this stage.

Data cleaning is essential for isolating the correct information, removing duplicates and handling missing values. This phase also uncovers inconsistent values, range errors and invalid entries.
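The checks described in this section can be sketched in a few lines of Python. This is a minimal example under stated assumptions: the field names (`customer_id`, `monthly_spend`) and the accepted spend range are hypothetical, and a real pipeline would usually repair or impute some values rather than simply rejecting the rows.

```python
def clean_records(rows, min_spend=0.0, max_spend=10_000.0):
    """Split rows into (clean, rejected); rejected rows carry a reason."""
    seen_ids = set()
    clean, rejected = [], []
    for row in rows:
        customer_id = (row.get("customer_id") or "").strip()
        raw_spend = (row.get("monthly_spend") or "").strip()

        # Missing values: a required field is absent or blank.
        if not customer_id or not raw_spend:
            rejected.append((row, "missing value"))
            continue
        # Duplicates: the same customer has already been accepted.
        if customer_id in seen_ids:
            rejected.append((row, "duplicate"))
            continue
        # Invalid entries: the value is not a number at all.
        try:
            spend = float(raw_spend)
        except ValueError:
            rejected.append((row, "invalid entry"))
            continue
        # Range errors: a number outside the plausible range.
        if not (min_spend <= spend <= max_spend):
            rejected.append((row, "range error"))
            continue

        seen_ids.add(customer_id)
        clean.append({"customer_id": customer_id, "monthly_spend": spend})
    return clean, rejected


rows = [
    {"customer_id": "1001", "monthly_spend": "120.50"},
    {"customer_id": "1001", "monthly_spend": "120.50"},  # duplicate
    {"customer_id": "1002", "monthly_spend": ""},         # missing value
    {"customer_id": "1003", "monthly_spend": "-5"},       # range error
    {"customer_id": "1004", "monthly_spend": "n/a"},      # invalid entry
    {"customer_id": "1005", "monthly_spend": "89.99"},
]

clean, rejected = clean_records(rows)
print(len(clean), "clean rows")
for row, reason in rejected:
    print(row["customer_id"], "rejected:", reason)
```

Keeping the rejected rows together with a reason, rather than dropping them silently, makes it easier to report data quality problems back to whoever owns the source system.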

Exploratory Data Analysis (EDA)

This phase is crucial for any data science service, as construction of the actual model begins at this point. Here the data scientist shares training and test datasets with the rest of the team. The training dataset undergoes various analyses, including association, classification, and clustering. Once the model is ready, it is evaluated against a “testing” dataset.
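The split between training and testing data can be illustrated with a small, self-contained sketch. The nearest-centroid classifier, the 25% test fraction, and the toy dataset are assumptions picked to keep the example short; they are not a method this article prescribes.

```python
import random


def train_test_split(rows, test_fraction=0.25, seed=0):
    """Shuffle a copy of the rows and split it into (train, test)."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def fit_centroids(train):
    """Compute the mean feature value for each class label."""
    sums, counts = {}, {}
    for x, label in train:
        sums[label] = sums.get(label, 0.0) + x
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def predict(centroids, x):
    """Assign x to the class whose centroid is closest."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))


def accuracy(centroids, test):
    """Fraction of test rows that are classified correctly."""
    correct = sum(predict(centroids, x) == label for x, label in test)
    return correct / len(test)


# Toy dataset: one numeric feature and two well-separated classes.
data = [(float(v), "low") for v in range(10)]
data += [(float(v), "high") for v in range(100, 110)]

train, test = train_test_split(data)
model = fit_centroids(train)  # the model only ever sees the training rows
print(f"train={len(train)} test={len(test)} "
      f"accuracy={accuracy(model, test):.2f}")
```

The point of the split is that the test rows play no part in fitting the model, so the accuracy measured on them is a fairer estimate of how the model behaves on unseen data.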

In-Depth Analysis (Model Building)

This is where the data scientists hand off the fully baselined model along with all of the reports, code, and technical documentation. After the model has been rigorously tested, it is put into a live production setting.

This phase produces a predictive model that can be compared against the client’s previous, underperforming models. Many developers focus on several aspects of this phase in order to build an accurate model that can predict who the consumers of a product or service will be.

At the end of this phase, the data scientists can combine the qualitative and quantitative data for further action. At this point, the data science work yields a clear picture of the relevant demographics, which can be used to solve the problem.
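One way to picture the comparison against an underperforming earlier model is to score both models on the same held-out data. The sketch below is only an illustration: the numbers are made up, the “previous model” is assumed to be a naive one that always predicts the training average, and the new model is a simple least-squares line.

```python
from statistics import mean


def mean_absolute_error(actual, predicted):
    """Average absolute difference between actual and predicted values."""
    return mean(abs(a - p) for a, p in zip(actual, predicted))


def fit_line(xs, ys):
    """Ordinary least squares fit for y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Hypothetical data: x could be ad spend, y could be units sold.
train_x, train_y = [1, 2, 3, 4, 5], [12, 19, 31, 42, 48]
test_x, test_y = [6, 7], [61, 70]

# Assumed "previous model": always predicts the training average.
baseline_pred = [mean(train_y)] * len(test_y)

# New model: a straight line fitted to the training data.
slope, intercept = fit_line(train_x, train_y)
model_pred = [slope * x + intercept for x in test_x]

baseline_mae = mean_absolute_error(test_y, baseline_pred)
model_mae = mean_absolute_error(test_y, model_pred)
print(f"previous model MAE: {baseline_mae:.2f}")
print(f"new model MAE:      {model_mae:.2f}")
```

Scoring both models on the same test rows with the same metric keeps the comparison fair, so any improvement can be attributed to the model rather than to the data.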

Communicate Results

At this point, the results are shared with everyone who has a stake in the outcome. The model’s outputs allow the team to determine whether or not the project was successful.

This phase settles on the solution to the problem framed in the first step. Clear communication at this point leads to action and to a strong solution with well-defined objectives.

Data Science Process Framework

There are many data science frameworks that you should know as a data scientist. All of them aim to support an effective workflow, and they work well in many use cases for deriving better insights from data.

Some of the most widely used frameworks in data science processes are TensorFlow, Keras and Theano, among many others.

Frameworks like these offer flexible, comprehensive tools and libraries that help data science professionals build data-based solutions easily. Each comes with a set of libraries that extend functionality and support data mining operations.

How Can Programmers Force Help?

Understanding raw data and extracting meaningful information from it is no easy feat. Data scientists dig in to understand the breadth and depth of complex problems and come up with mind-boggling solutions. That’s exactly what Programmers Force is here to help you with.

Our awesome team of data scientists can easily convert your raw, unstructured data into meaningful documents. From collecting, cleaning, processing, and analysing the data to building models, get in touch now to find out how our data science engineers can assist you.